Internet infrastructure firm Cloudflare is launching a suite of tools that could help shift the power dynamic between AI companies and the websites they crawl for data. Today it’s giving all of its customers—including the estimated 33 million using its free services—the ability to monitor and selectively block AI data-scraping bots.

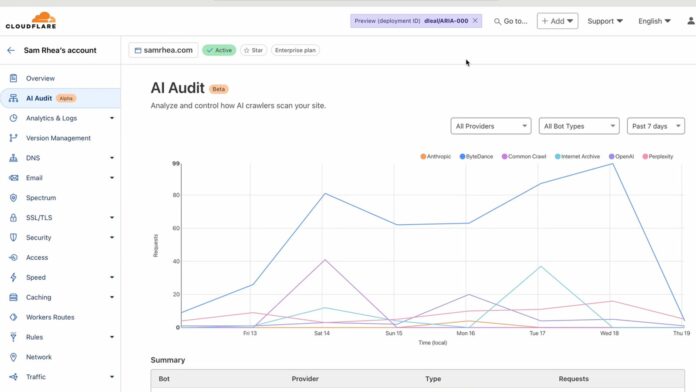

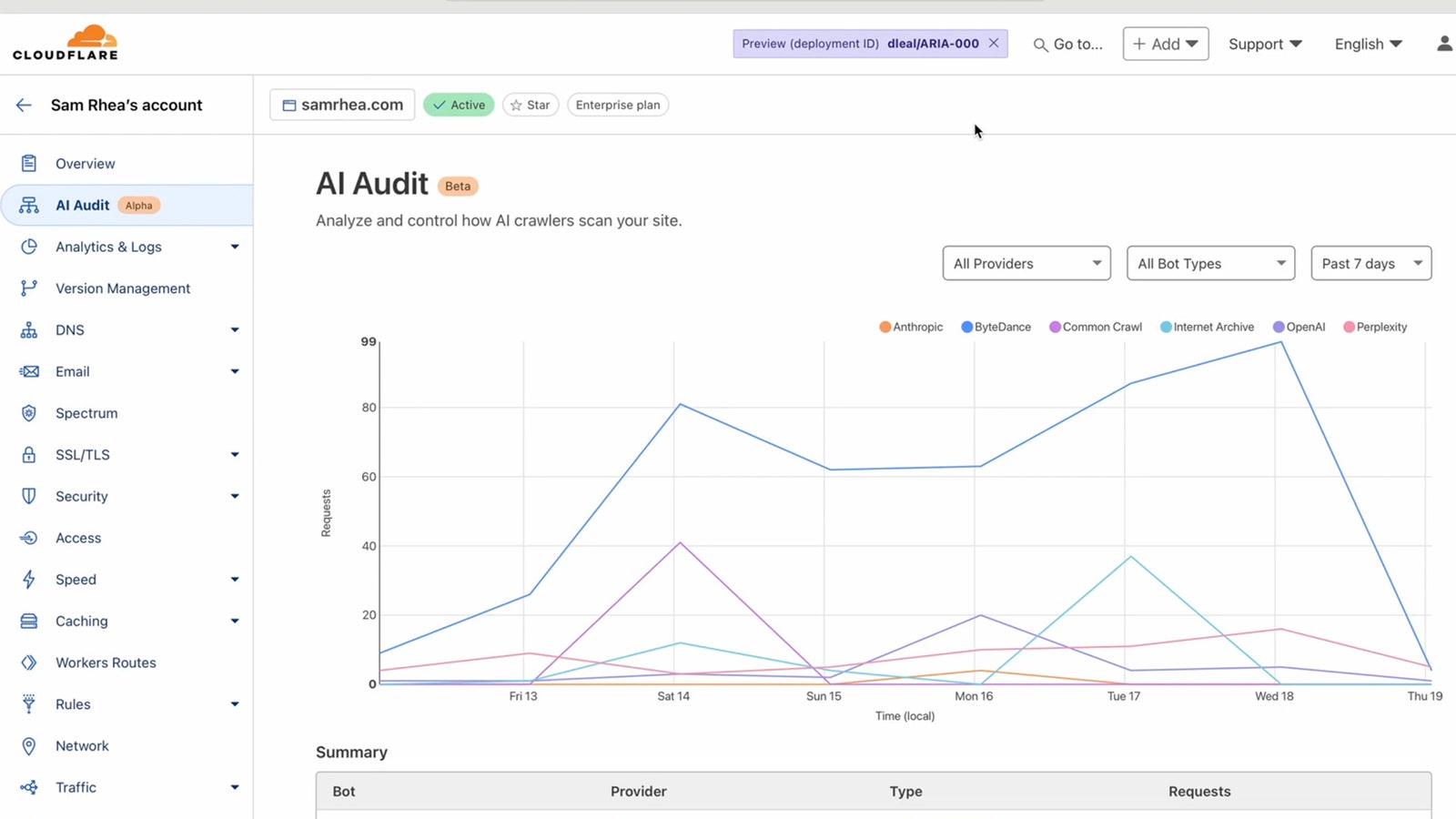

That preventative measure comes in the form of a suite of free AI auditing tools it calls Bot Management, the first of which allows real-time bot monitoring. Customers will have access to a dashboard showing which AI crawlers are visiting their websites and scraping data, including those attempting to camouflage their behavior.

“We’ve labeled all the AI crawlers, even if they try to hide their identity,” says Cloudflare cofounder and CEO Matthew Prince, who spoke to WIRED from the company’s European headquarters in Lisbon, Portugal, where he’s been based the past few months.

Cloudflare has also rolled out an expanded bot-blocking service, which gives customers the option to block all known AI agents, or block some and allow others. Earlier this year, Cloudflare debuted a tool that allowed customers to block all known AI bots in one go; this new version offers more control to pick and choose which bots they want to block or permit. It’s a chisel rather than a sledgehammer, increasingly useful as publishers and platforms strike deals with AI companies that allow bots to roam free.

“We want to make it easy for anyone, regardless of their budget or their level of technical sophistication, to have control over how AI bots use their content,” Prince says. Cloudflare labels bots according to their functions, so AI agents used to scrape training data are distinguished from AI agents pulling data for newer search products, like OpenAI’s SearchGPT.

Websites typically try to control how AI bots crawl their data by updating a text file called robots.txt, which implements the Robots Exclusion Protocol. This file has governed how bots scrape the web for decades. It’s not illegal to ignore robots.txt, but before the age of AI it was generally considered part of the web’s social code to honor the instructions in the file. Since the influx of AI-scraping agents, many websites have attempted to curtail unwanted crawling by editing their robots.txt files. Services like the AI agent watchdog Dark Visitors offer tools to help website owners stay on top of the ever-increasing number of crawlers they might want to block, but they’ve been limited by a major loophole: unscrupulous companies tend to simply ignore or evade robots.txt commands.

According to Dark Visitors founder Gavin King, most of the major AI agents still abide by robots.txt. “That’s been pretty consistent,” he says. But not all website owners have the time or knowledge to constantly update their robots.txt files. And even when they do, some bots will skirt the file’s directives: “They try to disguise the traffic.”
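To make the loophole concrete, here is a minimal sketch of how a well-behaved crawler might consult robots.txt before fetching a page, using Python's standard `urllib.robotparser`. The sample file contents, the `example.com` URL, and the `is_allowed` helper are illustrative assumptions, not taken from any real site or from Cloudflare's tooling; `GPTBot` is used only as an example of a crawler name a site might list.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block one named AI crawler, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots_txt permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes the file's lines; no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    page = "https://example.com/article"
    print(is_allowed("GPTBot", page))        # the named crawler is refused
    print(is_allowed("SomeOtherBot", page))  # falls through to the * rule
```

The check runs entirely on the crawler's side. A bot that never calls it, or that announces itself under a different user-agent string, is not stopped by the file, which is why the instructions only bind crawlers that choose to honor them.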

Prince says Cloudflare’s bot-blocking won’t be a command that this kind of bad actor can ignore. “Robots.txt is like putting up a ‘no trespassing’ sign,” he says. “This is like having a physical wall patrolled by armed guards.” Just as it flags other types of suspicious web behavior, like price-scraping bots used for illegal price monitoring, the company has created processes to spot even the most carefully concealed AI crawlers.

Cloudflare is also announcing a forthcoming marketplace for customers to negotiate scraping terms of use with AI companies, whether it involves payment for using content or bartering for credits to use AI services in exchange for scraping. “We don’t really care what the transaction is, but we do think that there needs to be some way of delivering value back to original content creators,” Prince says. “The compensation doesn’t have to be dollars. The compensation can be credit or recognition. It can be lots of different things.”

There’s no set date to launch that market, but even if it rolls out this year it will be joining an increasingly crowded field of projects intended to facilitate licensing agreements and other permissions arrangements between AI companies, publishers, platforms, and other websites.

What do the AI companies make of this? “We’ve talked to most of them, and their reactions have ranged from ‘this makes sense and we’re open’ to ‘go to hell,’” says Prince. (He wouldn’t name names, though.)

The project came together quickly. Prince cites a conversation with Atlantic CEO (and former WIRED editor in chief) Nick Thompson as inspiration for the project; Thompson had discussed how many different publishers had encountered surreptitious web scrapers. “I love that he’s doing it,” Thompson says. If even big-name media organizations struggled to deal with the influx of scrapers, Prince reasoned, independent bloggers and website owners would have even more difficulty.

Cloudflare has been a leading web security firm for years, and it provides a large portion of the infrastructure holding up the web. It has historically remained as neutral as possible about the content of the websites it services; on the rare occasions it made exceptions to that rule, Prince has emphasized that he doesn’t want Cloudflare to be the arbiter of what’s allowed online.

Here, he sees Cloudflare as uniquely positioned to take a stand. “The path we’re on isn’t sustainable,” Prince says. “Hopefully we can be a part of making sure that humans get paid for their work.”

Source: Wired